Moz Q&A is closed.

After more than 13 years, and tens of thousands of questions, Moz Q&A closed on 12th December 2024. Whilst we’re not completely removing the content - many posts will still be possible to view - we have locked both new posts and new replies. More details here.

PDF for link building - avoiding duplicate content

-

Hello,

We've got an article that we're turning into a PDF. Both the article and the PDF will be on our site. This PDF is a good, thorough piece of content on how to choose a product.

We're going to strip out all of the links to our in the article and create this PDF so that it will be good for people to reference and even print. Then we're going to do link building through outreach since people will find the article and PDF useful.

My question is, how do I use rel="canonical" to make sure that the article and PDF aren't duplicate content?

Thanks.

-

Hey Bob

I think you should forget about any kind of perceived conventions and have whatever you think works best for your users and goals.

Again, look at unbounce, that is a custom landing page with a homepage link (to share the love) but not the general site navigation.

They also have a footer to do a bit more link love but really, do what works for you.

Forget conventions - do what works!

Hope that helps

Marcus -

I see, thanks! I think it's important not to have the ecommerce navigation on the page promoting the pdf. What would you say is ideal as far as the graphical and navigation components of the page with the PDF on it - what kind of navigation and graphical header should I have on it?

-

Yep, check the HTTP headers with webbug or there are a bunch of browser plugins that will let you see the headers for the document.
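If you would rather script the check than use a plugin, something along these lines might do. It is only a rough sketch using Python's standard library: the function names are made up for illustration, and the parsing is deliberately simple rather than a full Link header parser.

```python
import re
from typing import Optional
from urllib.request import Request, urlopen


def canonical_from_link_header(value: str) -> Optional[str]:
    """Return the rel="canonical" target from a Link header value, or None."""
    # Each entry in the header looks like: <url>; rel="canonical"
    for match in re.finditer(r'<([^>]*)>\s*((?:;[^,]*)*)', value):
        url, params = match.group(1), match.group(2)
        if re.search(r'\brel\s*=\s*"?[^";]*\bcanonical\b', params, re.IGNORECASE):
            return url
    return None


def fetch_canonical(url: str) -> Optional[str]:
    """Send a HEAD request and inspect any Link headers (needs network access)."""
    request = Request(url, method="HEAD")
    with urlopen(request, timeout=10) as response:
        # A response may carry several Link headers; join them before parsing.
        links = response.headers.get_all("Link") or []
        return canonical_from_link_header(", ".join(links))
```

Calling `fetch_canonical()` on the PDF's URL should give back the page you set as canonical if the header is being sent, and `None` if it is not.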

That said, I would push to drive the links to the page though rather than the document itself and just create a nice page that houses the document and make that the link target.

You could even make the PDF link only available by email once they have signed up or some such, as canonical is only a directive and you would still be better off getting those links flooding into a real page on the site.

You could even offer up some HTML that links to your main page, to make it easier for folks to link to you. If you take a look at any savvy infographics etc., folks will try to draw a link into a page rather than the image itself for the very same reasons.

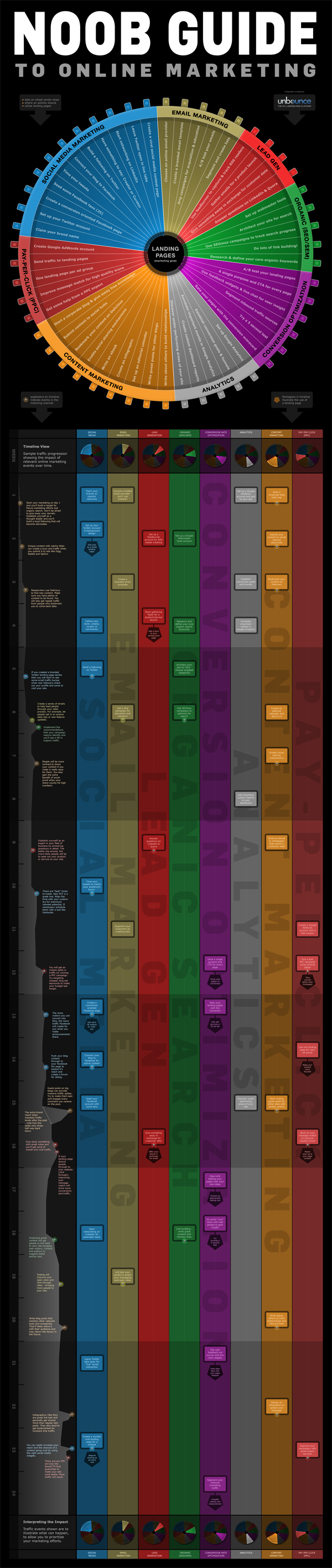

If you look at something like the Noobs Guide to Online Marketing from Unbounce then you will see something like this as the suggested linking code:

[infographic image, linked to http://unbounce.com/noob-guide-to-online-marketing-infographic/]

Unbounce – The DIY Landing Page Platform

So, the image is there but the link they are pimping is a standard page:

http://unbounce.com/noob-guide-to-online-marketing-infographic/

They also cheekily add an extra homepage link in as well with some keywords and the brand so if folks don't remove that they still get that benefit.

Ultimately, it means that when links flood into the site they benefit the whole site rather than just promote one PDF.

Just my tuppence!

Marcus -

Thanks for the code Marcus.

Actually, the PDF is what people will be linking to. It's a guide for websites. I think the PDF will be much easier to promote than the article. I assume so anyway.

Is there a way to make sure my canonical code in htaccess is working after I insert the code?

Thanks again,

Bob

-

Hey Bob

There is a much easier way to do this: simply put the PDFs that you don't want indexed in a folder that you block access to in robots.txt. This way you can just drop PDFs into articles and link to them, knowing full well these files will not be indexed.

Assuming you had a PDF called article.pdf in a folder called pdfs/ then the following would prevent indexation.

User-agent: *
Disallow: /pdfs/

Or to just block the file itself:

User-agent: *
Disallow: /pdfs/yourfile.pdf

Additionally, there is no reason not to add the canonical link as well and if you find people are linking directly to the PDF then having this would ensure that the equity associated with those links was correctly attributed to the parent page (always a good thing).

Header add Link '<http://www.url.co.uk/pdfs/article.html>; rel="canonical"'

Generally, there are better ways to block indexation than with robots.txt but in the case of PDFs, we really don't want these files indexed as they make for such poor landing pages (no navigation) and we certainly want to remove any competition or duplication between the page and the PDF so in this case, it makes for a quick, painless and suitable solution.
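If you want to sanity-check rules like these before uploading them, Python's built-in robots.txt parser can be pointed at the text directly. This is just a sketch: the helper name and the example URLs are placeholders, and the standard-library parser may not match every quirk of how a given search engine reads the file.

```python
from urllib.robotparser import RobotFileParser

# The folder-level rule from above, as it would appear in robots.txt.
RULES = """\
User-agent: *
Disallow: /pdfs/
"""


def is_crawlable(rules: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(rules.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # Placeholder URLs: a PDF inside the blocked folder and a normal article page.
    print(is_crawlable(RULES, "http://www.domain.com/pdfs/article.pdf"))
    print(is_crawlable(RULES, "http://www.domain.com/articles/article.html"))
```

One thing worth keeping in mind: a crawler that obeys the Disallow rule never requests the file, so it would not get to see any header sent along with it.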

Hope that helps!

Marcus -

Thanks ThompsonPaul,

Say the pdf is located at

domain.com/pdfs/white-papers.pdf

and the article that I want to rank is at

domain.com/articles/article.html

do I simply add this to my htaccess file?:

Header add Link "<http://www.domain.com/articles/article.html>; rel="canonical""

-

You can insert the canonical header link using your site's .htaccess file, Bob. I'm sure Hostgator provides access to the htaccess file through ftp (sometimes you have to turn on "show hidden files") or through the file manager built into your cPanel.

Check tip #2 in this recent SEOMoz blog article for specifics:

seomoz.org/blog/htaccess-file-snippets-for-seos

Just remember too - you will want to do the same kind of on-page optimization for the PDF as you do for regular pages.

- Give it a good, descriptive, keyword-appropriate, dash-separated file name. (essential for usability as well, since it will become the title of the icon when saved to someone's desktop)

- Fill out the metadata for the PDF, especially the Title and Description. In Acrobat it's under File -> Properties -> Description tab (to get the meta-description itself, you'll need to click on the Additional Metadata button)

I'd be tempted to build the links to the HTML page as much as possible, as those will directly help ranking, unlike the PDF's inbound links, which will have to pass their link juice through the canonical (assuming you're using it). Plus, the visitor will get a preview of the PDF's content and context from the rest of your site, which may increase trust and engender further engagement.

Your comment about links in the PDF got kind of muddled, but you'll definitely want to make certain there are good links and calls to action back to your website within the PDF - preferably on each page. Otherwise there's no clear "next step" for users reading the PDF back to a purchase on your site. Make sure to put Analytics tracking tags on these links so you can assess the value of traffic generated back from the PDF - otherwise the traffic will just appear as Direct in your Analytics.
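For the tracking tags, here is a rough sketch of building tagged links in Python. It assumes Google Analytics style `utm_` campaign parameters; the function name and the source/medium/campaign values are placeholders to swap for your own naming scheme.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_tracking(url: str, source: str, medium: str, campaign: str) -> str:
    """Append utm_ campaign parameters to url, keeping any existing query string."""
    parts = urlsplit(url)
    # Drop tracking parameters that are already present so they are not duplicated.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in ("utm_source", "utm_medium", "utm_campaign")
    ]
    query += [
        ("utm_source", source),
        ("utm_medium", medium),
        ("utm_campaign", campaign),
    ]
    return urlunsplit(parts._replace(query=urlencode(query)))


if __name__ == "__main__":
    # Hypothetical values for a link placed inside the PDF.
    print(add_tracking(
        "http://www.domain.com/articles/article.html",
        source="white-paper",
        medium="pdf",
        campaign="buying-guide",
    ))
```

Use the tagged URL for each link inside the PDF so that visits arriving from it are reported under that campaign rather than as Direct.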

Hope that all helps;

Paul

-

Can I just use htaccess?

See here: http://www.seomoz.org/blog/how-to-advanced-relcanonical-http-headers

We only have one pdf like this right now and we plan to have no more than five.

Say the pdf is located at

domain.com/pdfs/white-papers.pdf

and the article that I want to rank is at

domain.com/articles/article.pdf

do I simply add this to my htaccess file?:

Header add Link "<http://www.domain.com/articles/article.pdf>; rel="canonical""

-

How do I know if I can do an HTTP header request? I'm using shared hosting through hostgator.

-

PDFs don't seem to rank as well as normal web pages. They still rank, don't get me wrong: we have over 100 PDF pages that get traffic for us. The main version is really up to you; what do you want to show in the search results? I think it would be easier to rank a normal web page, though. If you use rel="canonical", it will pass most of the link juice: not all, but most.

-

Thank you DoRM,

I assume that the PDF is what I want to be the main version since that is what I'll be marketing, but I could be wrong? What if I get backlinks to both pages, will both sets of backlinks count?

-

Indicate the canonical version of a URL by responding with the Link rel="canonical" HTTP header. Adding rel="canonical" to the head section of a page is useful for HTML content, but it can't be used for PDFs and other file types indexed by Google Web Search. In these cases you can indicate a canonical URL by responding with the Link rel="canonical" HTTP header, like this (note that to use this option, you'll need to be able to configure your server):

Link: <http://www.example.com/downloads/white-paper.pdf>; rel="canonical"

Google currently supports these link header elements for Web Search only.

You can read more here: http://support.google.com/webmasters/bin/answer.py?hl=en&answer=139394
